Interactive Visual Interfaces

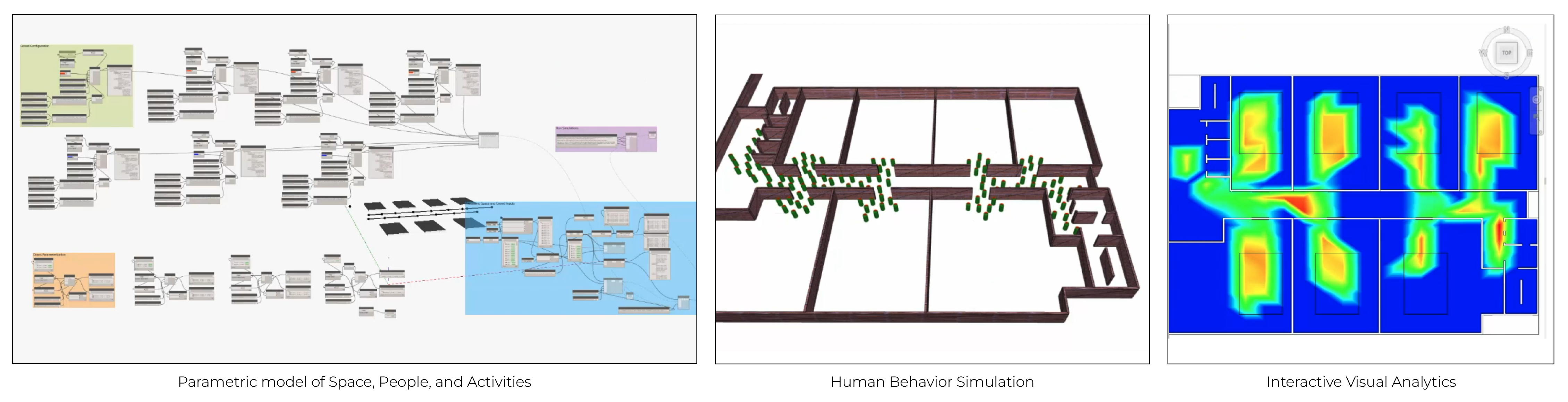

At IPL, we couple human behavior simulation with interactive visual interfaces to support the dynamic exploration of human-environment interactions. Our goal is to support the decision-making of architects, planners, engineers, and building managers during both the design and operation phases of a building. A key aspect of this approach is coupling parametric models of buildings and occupants so that human behavior simulation can be integrated into architectural design workflows through an intuitive visual scripting approach accessible to designers without coding experience.

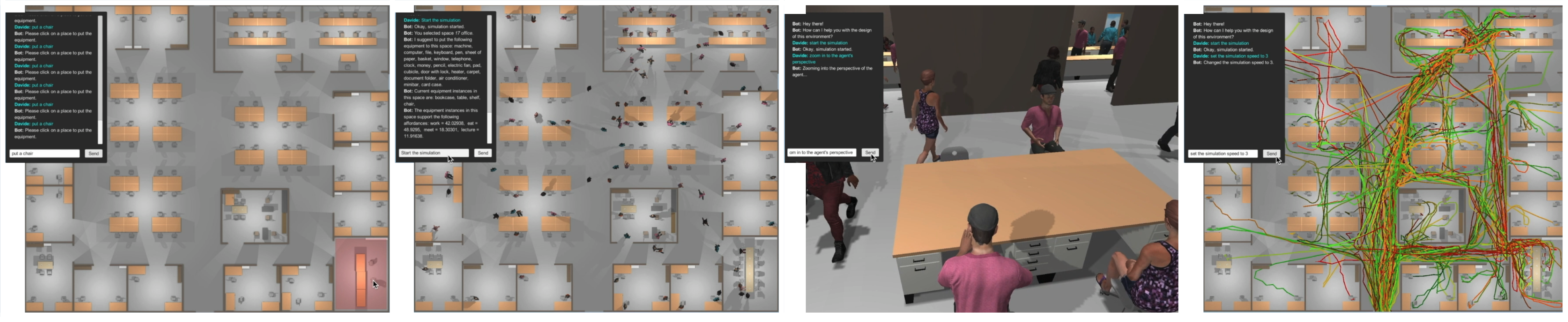

Interfaces for human-building interaction simulations can include natural language processing to guide the design process and facilitate the simulation of occupants' behavior. Such natural language interfaces can rely on multimodal large language models (LLMs), such as GPT-4 developed by OpenAI, or on other knowledge-based systems that capture human-building interactions using ontologies.

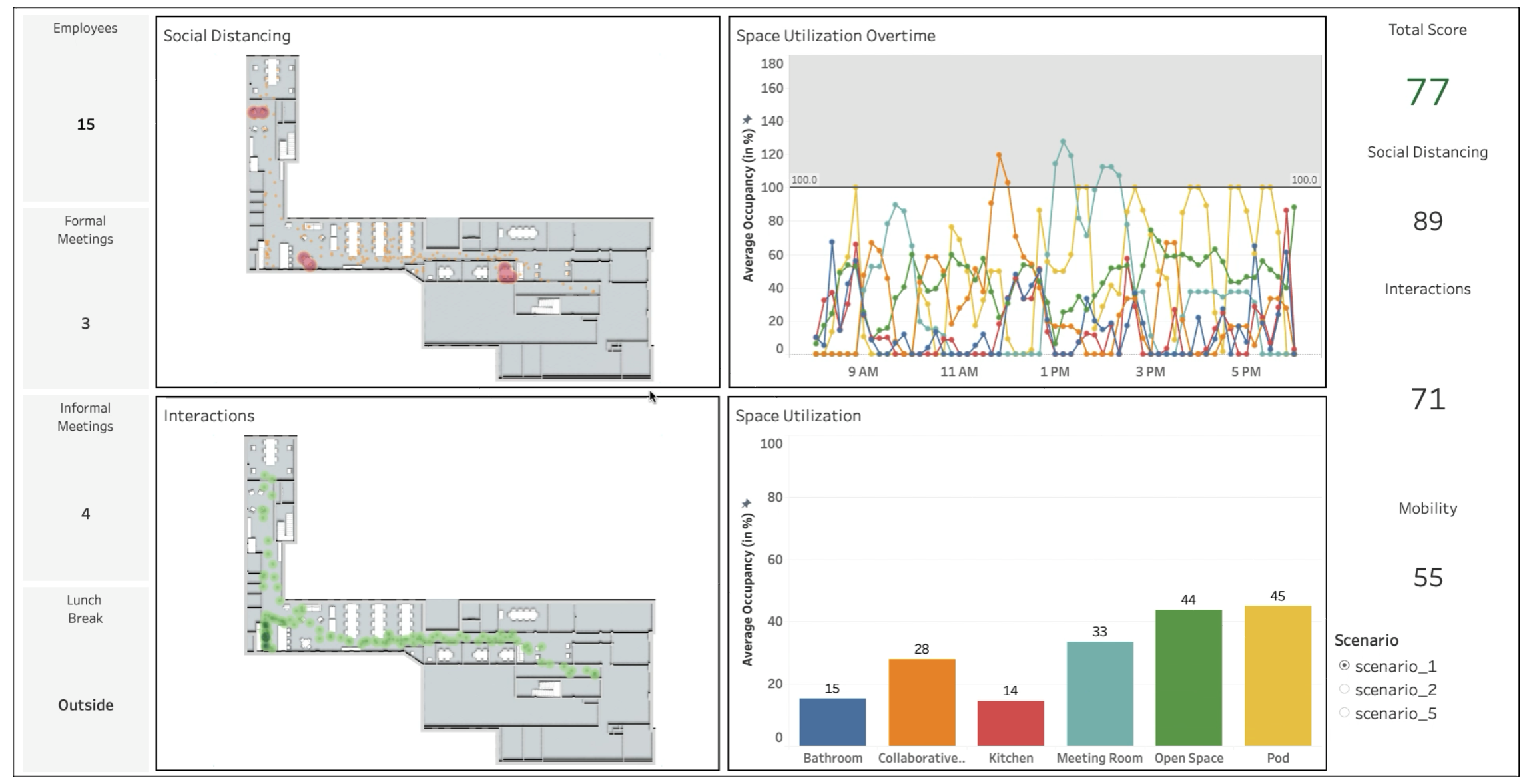

A vital requirement of interactive visual interfaces is to communicate the setup and outcomes of human behavior simulations efficiently and effectively to stakeholders with diverse backgrounds, including architects, engineers, planners, end-users, and building managers. Such an interface should also enable comparative evaluation of alternative scenarios that differ in their simulation inputs, such as alternative building designs or assumptions about use scenarios.

Publications

- Haworth B., Usman M., Schaumann D., Chakraborty N., Berseth G., Faloutsos P., Kapadia M. 2020. “Gamification of Crowd-Driven Environment Design”. IEEE Computer Graphics and Applications. Vol. 41 No. 4, pp. 107-117.

- Berseth G., Haworth B., Usman M., Schaumann D., Khayatkhoei M., Kapadia M., Faloutsos P. 2019. “Interactive Architectural Design with Diverse Solution Exploration”. IEEE Transactions on Visualization and Computer Graphics. Vol. 27 No. 1, pp. 111-124.

- Azizi V., Patel S., Usman M., Schaumann D., Faloutsos P., Kapadia M. 2020. “Floorplan Embedding with Latent Semantics and Human Behavior Annotations”. Symposium on Simulation for Architecture and Urban Design (SimAUD).

- Usman M., Schaumann D., Haworth B., Kapadia M., Faloutsos P. 2019. “Joint Exploration and Analysis of High-Dimensional Design–Occupancy Templates”. Proceedings of the 12th Annual International ACM Conference on Motion, Interaction, and Games (MIG).

- Zhang X., Schaumann D., Faloutsos P., Kapadia M. 2019. “Knowledge-Powered Inference of Crowd Behaviors in Semantically Rich Environments”. Proceedings of the 15th AAAI Conference on Artificial Intelligence and Interactive Digital Entertainment (AIIDE). Atlanta, GA, USA.

- Usman M., Schaumann D., Haworth B., Kapadia M., Faloutsos P. 2019. “Joint Parametric Modeling of Buildings and Crowds for Human-Centric Simulation and Analysis”. Proceedings of the Computer-Aided Architectural Design Futures. Daejeon, Korea.

- Usman M., Schaumann D., Haworth B., Berseth G., Kapadia M., Faloutsos P. 2018. “Interactive Spatial Analytics for Human-Aware Building Design”. Proceedings of the 11th Annual International ACM Conference on Motion, Interaction, and Games (MIG). Cyprus.

- Gath-Morad M., Zinger M., Schaumann D., Putievsky Pilosof N., Kalay Y.E. 2018. “A Dashboard Model to Support Spatio-Temporal Analysis of Simulated Human Behavior in Future Built Environments”. Symposium on Simulation for Architecture and Urban Design (SimAUD). Delft, Netherlands.

- Schaumann D., Date K., Kalay Y. 2017. “An Event Modeling Language (EML) to simulate use patterns in built environments”. Symposium on Simulation for Architecture and Urban Design (SimAUD). Toronto, Canada.